Prompt Engineering: The Complete Technical Guide to Mastering Prompt Engineering in 2026

Prompt engineering is the discipline of designing, optimizing and structuring instructions for generative artificial intelligence models. Far more than a simple writing skill, it is a full-fledged technical field that combines linguistics, logic, cognitive psychology and an understanding of language model architectures. This reference guide covers the main techniques, frameworks and tools that every prompt engineer should master, from beginner to expert level.

10 Best Practices for Prompt Engineering

These best practices, drawn from academic research and hands-on experience, form the foundation of professional prompt engineering practice.

- Start simple and iterate: don't try to put everything in the first prompt. Begin with a clear instruction, evaluate the result, then progressively add context, constraints and examples. Iteration is at the heart of the engineering process.

- Use explicit delimiters: clearly separate the different sections of the prompt with markers (```, ###, ---). This helps the model distinguish instructions from context and examples from real data, and it can reduce (though not eliminate) prompt injection risks.

- Specify the output format: always indicate how the response should be structured (JSON, Markdown, list, table). An explicit format eliminates ambiguity and enables direct integration into automated workflows.

- Include high-quality examples: examples (few-shot) are the most reliable way to communicate your expectations. Choose representative, varied and high-quality examples. Avoid ambiguous or contradictory examples.

- Define negative constraints: specifying what the AI should NOT do is just as important as what it should do. 'Do not include technical jargon', 'Do not exceed 200 words', 'Do not fabricate sources' are constraints that significantly improve quality.

- Test on edge cases: a good prompt must work not only on the nominal case but also on edge cases (unusual inputs, ambiguous cases, missing data). Systematically test with varied inputs to ensure robustness.

- Version and document prompts: treat prompts like code — version them, comment them, test them. An undocumented prompt is a lost prompt. Use tools like Promptfoo or git to track prompt evolution.

- Adapt the technique to the model: each model has its strengths and weaknesses. GPT-4 is often cited for reasoning, Claude for following long instructions and for honesty, and Gemini for multimodality, but these strengths shift from one model version to the next. Adapt the prompting technique to the specifics of the model being used.

- Measure and optimize: define evaluation metrics (relevance, factuality, coherence, format) and systematically measure prompt performance. Without measurement, there is no improvement. Use both automated and human evaluations.

- Stay up to date: the field evolves extremely fast. New techniques, models and tools appear every week. Follow publications (arXiv, Anthropic/OpenAI/Google blogs), communities (Reddit, Discord, Twitter) and experiment continuously.
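
Several of these practices (an explicit output format, edge-case testing, measurement) fit together in a small evaluation harness. The sketch below is illustrative only: `fake_model` is a hypothetical stand-in for a real API call, and the prompt, JSON schema and test cases are assumptions chosen for the example.

```python
import json
from typing import Callable

# Instruction, required output format and input data are separated by a delimiter.
PROMPT_TEMPLATE = (
    "Classify the sentiment of the text as positive, negative or neutral.\n"
    'Respond only with JSON of the form {{"sentiment": "<label>"}}.\n'
    "###\n"
    "{text}"
)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; swap in your provider's SDK."""
    text = prompt.split("###")[-1].strip().lower()
    if not text:
        return '{"sentiment": "neutral"}'
    if "love" in text:
        return '{"sentiment": "positive"}'
    if "disappointing" in text:
        return '{"sentiment": "negative"}'
    return "Not sure."  # deliberately malformed: ignores the required JSON format


def evaluate(model: Callable[[str], str], cases: list[tuple[str, str]]) -> float:
    """Fraction of cases where the output is valid JSON carrying the expected label."""
    passed = 0
    for text, expected in cases:
        raw = model(PROMPT_TEMPLATE.format(text=text))
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            continue  # a format violation counts as a failure
        if isinstance(parsed, dict) and parsed.get("sentiment") == expected:
            passed += 1
    return passed / len(cases)


CASES = [
    ("I love this product, it changed my life!", "positive"),  # nominal case
    ("This service is disappointing.", "negative"),            # nominal case
    ("", "neutral"),                                           # edge case: empty input
    ("The package arrived on Tuesday.", "neutral"),            # edge case: no sentiment cue
]

print(f"pass rate: {evaluate(fake_model, CASES):.2f}")
```

Run as-is, it prints `pass rate: 0.75`: the last case fails because the stand-in model ignores the required JSON format, which is the kind of regression a versioned test set is meant to catch. With a real model, the same loop works unchanged once `fake_model` is replaced by an actual API call.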

Career in Prompt Engineering: Profession, Skills and Salaries

Prompt engineering has become a genuine profession with its own requirements, opportunities and career prospects. Here's what you need to know to get started or advance.

- Prompt Engineer Profile — A prompt engineer combines skills in written communication, logic, understanding of AI systems and often programming. The best profiles come from varied backgrounds: linguists, developers, data scientists, technical writers, UX designers. The key is the ability to think in a structured way while understanding the nuances of language.

- Required Skills — Technical skills: understanding of LLM architectures, mastery of APIs (OpenAI, Anthropic, Google), Python basics, NLP and tokenization fundamentals. Cross-functional skills: excellent writing, critical thinking, ability to iterate quickly, experimental mindset. Domain skills: understanding of the application area (marketing, legal, finance, healthcare, etc.).

- Salary Levels (2025) — Junior (0-2 years): 35,000-55,000 EUR/year in France, $60K-$90K in the USA. Mid-level (2-5 years): 55,000-80,000 EUR, $90K-$150K. Senior/Lead: 80,000-120,000 EUR, $150K-$250K. Expert/Independent consultant: 500-1,500 EUR/day. Salaries vary significantly depending on the sector (finance and tech pay more), company size and location.

- Types of Positions — Prompt Engineer: designing and optimizing prompts for AI products. AI Content Strategist: prompting applied to marketing and content. LLM Application Developer: building applications based on prompts and agents. AI Solutions Architect: designing complete systems integrating prompting. AI Trainer: creating training and evaluation data for models.

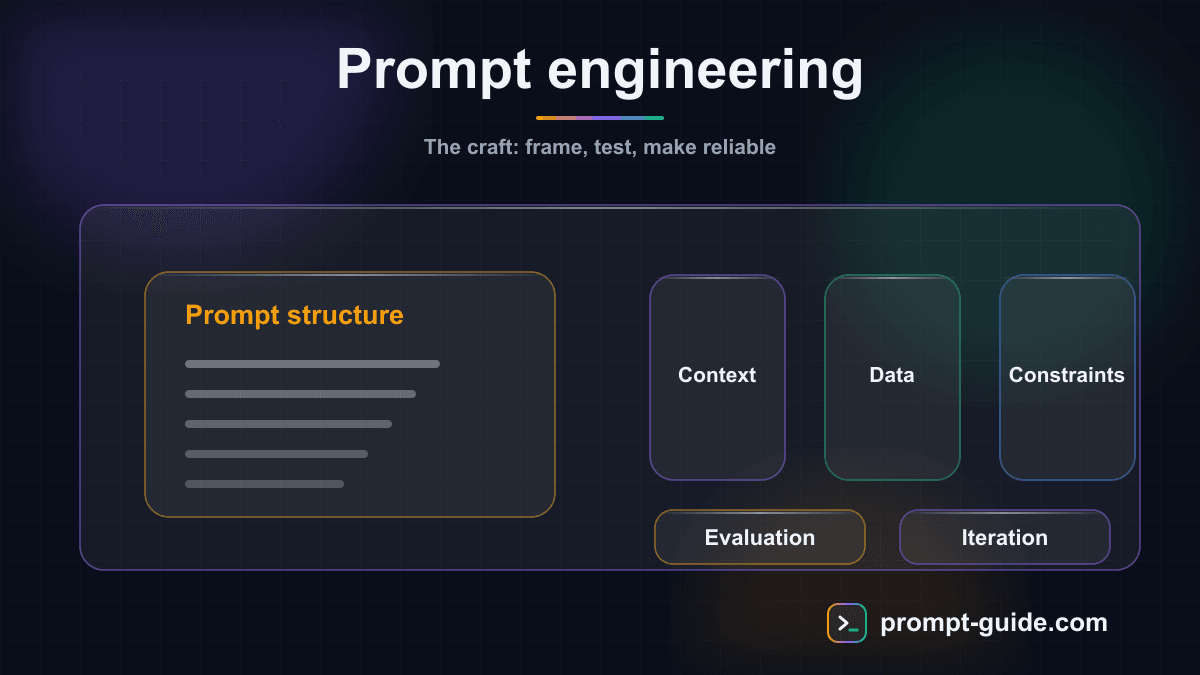

What Is Prompt Engineering? Technical Definition

Prompt engineering is the systematic process of designing instructions (prompts) that make the best use of the capabilities of large language models (LLMs). Unlike traditional programming, where code executes deterministically, prompt engineering operates in a probabilistic space: because the model samples its output, the same prompt can produce slightly different results each time it runs, depending on settings such as temperature. This discipline encompasses understanding the internal mechanisms of LLMs (tokenization, attention, text generation), mastering different prompting strategies, and evaluating and iterating on results. Prompt engineering is distinguished from simple 'prompting' by its methodological rigor: it is an engineering approach with reproducible principles, evaluation metrics and systematic optimization processes.

Common Mistakes in Prompt Engineering

Avoiding these common pitfalls will save you time and significantly improve the quality of your results.

- Prompts that are too vague: 'Write something about marketing' never produces a good result. Always specify the precise topic, angle, format, tone and expected length.

- Information overload: injecting too much context or too many constraints hurts performance. The model can lose track or give too much importance to secondary details. Keep only relevant information.

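- Ignoring temperature: temperature controls the trade-off between output variety and determinism. Use a temperature at or near 0 for factual tasks (extraction, classification) and around 0.7-1.0 for creative tasks (brainstorming, writing); even at 0, outputs are not always strictly identical from run to run. Don't use the same setting for everything.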

- Not iterating: a perfect prompt on the first try is rare. Prompt engineering is an iterative process. Analyze errors, adjust instructions, test again. Each iteration brings you closer to the optimal result.

- Copy-pasting without adapting: a prompt that works for ChatGPT won't necessarily work the same way with Claude or Gemini. Each model has its own specifics and preferred formats.

- Neglecting evaluation: not testing your prompts on a representative set of cases is the main cause of poor performance in production. A prompt tested on a single example is not validated.
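
To see why temperature changes output variety, recall what it does: the model's raw scores for candidate tokens (logits) are divided by the temperature before a softmax turns them into probabilities. The sketch below uses made-up logits for three tokens; providers apply this step internally, and a temperature of 0 is typically handled as greedy (most-likely-token) decoding rather than a literal division, which is why the function rejects it.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw token scores into sampling probabilities at a given temperature."""
    if temperature <= 0:
        # Division by zero is undefined; providers typically treat 0 as greedy decoding.
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate tokens
for t in (0.2, 0.7, 1.0):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: {[round(p, 3) for p in probs]}")
```

At T=0.2 almost all of the probability mass falls on the top token, so sampling is nearly deterministic; at T=1.0 the alternatives keep a sizeable share, which is where more varied text comes from.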

Prompt Engineering Frameworks

Frameworks provide reproducible structures for building effective prompts. Each framework has its strengths and suits different situations. Mastering these frameworks allows you to quickly create high-quality prompts without starting from scratch every time.

- RACE Framework — Role, Action, Context, Expectation. One of the most widely used frameworks. Role: Who is the AI in this interaction? Action: What should it do precisely? Context: What background information is relevant? Expectation: What result is expected (format, tone, length)? Example: Role: Digital marketing expert / Action: Write 5 advertising hooks / Context: Launch of a fitness app for seniors / Expectation: Hooks of 10 words max, warm and motivating tone.

- CO-STAR Framework — Context, Objective, Style, Tone, Audience, Response. A very comprehensive framework developed by GovTech Singapore. Context: background information. Objective: what you want to accomplish. Style: writing style (academic, journalistic, conversational). Tone: emotional attitude (enthusiastic, neutral, urgent). Audience: who the result is intended for. Response: output format. Ideal for content creation where style and tone are crucial.

- RODES Framework — Role, Objective, Details, Examples, Sense-check. A framework that integrates a verification step. Role: assigned expertise. Objective: precise goal. Details: constraints and specifications. Examples: examples of the expected result. Sense-check: asking the model to verify its own response before submitting it. The Sense-check step distinguishes this framework by adding an automatic quality control layer.

- RISEN Framework — Role, Instructions, Steps, End goal, Narrowing. Role: AI persona. Instructions: clear directives. Steps: steps to follow in order. End goal: measurable final objective. Narrowing: constraints to refine (what NOT to include, length limits, etc.). Particularly effective for procedural tasks and multi-step workflows.

- APE Framework (Automatic Prompt Engineer) — An automated approach proposed by Zhou et al. (2022). Instead of manually writing prompts, you ask the LLM to generate, evaluate and optimize its own prompts. Process: 1) Generate N candidate prompts, 2) Evaluate each prompt on a test set, 3) Select the best performer, 4) Iterate. This meta-technique illustrates that prompt engineering itself can be automated.

- CRISPE Framework — Capacity & Role, Insight, Statement, Personality, Experiment. Capacity & Role: AI capabilities and role. Insight: context and background. Statement: the precise request. Personality: style and tone. Experiment: request to generate multiple variations for comparison. Ideal for creative tasks where you want to explore several options before choosing.
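
Because each framework is just a fixed set of named slots, it maps naturally onto a small template object. A minimal sketch for RACE, filled with the example given above; the class name, the `###` section headers and the added punctuation are choices made for this illustration, and CO-STAR, RODES, RISEN or CRISPE would follow the same pattern with different fields.

```python
from dataclasses import dataclass


@dataclass
class RacePrompt:
    """The four RACE components: Role, Action, Context, Expectation."""
    role: str
    action: str
    context: str
    expectation: str

    def render(self) -> str:
        # Each component gets its own labelled section so the model can tell them apart.
        return "\n".join([
            f"### Role\nYou are a {self.role}.",
            f"### Action\n{self.action}.",
            f"### Context\n{self.context}.",
            f"### Expectation\n{self.expectation}.",
        ])


prompt = RacePrompt(
    role="digital marketing expert",
    action="Write 5 advertising hooks",
    context="Launch of a fitness app for seniors",
    expectation="Hooks of 10 words max, warm and motivating tone",
)
print(prompt.render())
```

Keeping the components as separate fields also makes it easy to version them and to vary one slot at a time when testing.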

The Evolution of Prompt Engineering: From GPT-2 to the Age of Agents

The history of prompt engineering is closely tied to that of language models. Understanding this evolution helps grasp why certain techniques work and how the field continues to transform.

2019-2020: The Beginnings with GPT-2 and GPT-3

The first work on prompt engineering emerged with GPT-2 (2019) and especially GPT-3 (2020). Researchers discovered that the wording of instructions strongly influences model performance. The foundational paper by Brown et al. (2020), 'Language Models are Few-Shot Learners', showed that GPT-3 could perform new tasks simply from examples in the prompt, without fine-tuning. This was the birth of the 'prompt-based learning' paradigm.

2021-2022: The Explosion of Techniques

Foundational papers were published: Chain of Thought (Wei et al., 2022), Self-Consistency (Wang et al., 2022) and Least-to-Most Prompting (Zhou et al., 2022). InstructGPT and RLHF (Reinforcement Learning from Human Feedback) transformed how models respond to instructions. The arrival of ChatGPT in late 2022 brought prompting to the general public on a massive scale.

2023: Professionalization

Prompt engineering became a recognized profession with dedicated job postings. Frameworks multiplied (RACE, CO-STAR, RODES, APE). Companies integrated prompt engineers into their teams. Models became multimodal (GPT-4V, Gemini) and prompting expanded to images, code and audio.

2024-2025: The Age of Agents and Reasoning

Techniques evolved toward agentic systems where LLMs orchestrate tools, make decisions and execute complex tasks end-to-end. Reasoning models (o1, o3, Claude with extended thinking) changed the game: some complex prompts became simpler because the model reasons on its own. Prompt engineering shifted toward designing systems and agent architectures rather than individual prompts.

Tools and Resources for Prompt Engineering

The prompt engineering ecosystem now has numerous tools to facilitate prompt creation, testing and optimization.

- Playgrounds and IDEs — Official playgrounds (OpenAI Playground, Anthropic Console, Google AI Studio) allow you to test prompts with precise parameter control (temperature, top_p, max_tokens). Specialized platforms like PromptLayer, LangSmith or Promptfoo offer advanced features such as prompt versioning, A/B testing, automatic evaluation and performance tracking.

- Development Frameworks — LangChain (Python/JS) and LlamaIndex are the most popular frameworks for building prompt-based applications. They provide abstractions for prompt chaining, RAG, agents and model orchestration. Semantic Kernel (Microsoft) and Haystack (deepset) are solid alternatives. For pure prompt engineering, Microsoft's Guidance library offers fine-grained control over generation.

- Evaluation Tools — Promptfoo allows you to systematically test prompts against test sets. RAGAS evaluates the quality of RAG systems. TruLens measures response fidelity, relevance and toxicity. These tools are essential for transitioning from artisanal prompting to a rigorous engineering approach with reproducible metrics.

- Prompt Libraries — Platforms like PromptBase, FlowGPT and ShareGPT offer thousands of community-tested and optimized prompts. The Art of Prompting provides templates, exercises and a prompt builder for methodical progress. These resources allow you to draw inspiration from what works rather than starting from scratch.

- Prompt Builders — No-code tools like The Art of Prompting's Prompt Builder, Promptly or PromptPerfect allow you to build structured prompts following proven frameworks without mastering the syntax. They guide users step by step through the components of a good prompt (role, context, instruction, format, constraints).

Resources for Learning Prompt Engineering

To deepen your knowledge of prompt engineering, here are the best resources available in 2025.

- Free Training — The Art of Prompting offers a complete free training course covering fundamentals, advanced techniques and practical applications. The 'ChatGPT Prompt Engineering for Developers' course by Andrew Ng and Isa Fulford on DeepLearning.AI is a reference. Official documentation from Anthropic and OpenAI contains detailed prompting guides that are regularly updated.

- Essential Research Papers — Chain-of-Thought Prompting (Wei et al., 2022), Self-Consistency (Wang et al., 2022), Tree of Thoughts (Yao et al., 2023), ReAct (Yao et al., 2022), Automatic Prompt Engineer (Zhou et al., 2022), Constitutional AI (Bai et al., 2022). These papers are freely available on arXiv and form the theoretical basis of the field.

- Practice and Exercises — The best way to learn prompt engineering is to practice. The Art of Prompting's interactive exercises and FlowGPT community challenges let you test and improve your skills continuously. Public benchmarks like MMLU and HellaSwag measure model capabilities rather than your own, but running prompt variants against them is a measurable way to see which strategies actually help.

- Communities and News — Reddit r/PromptEngineering and r/ChatGPT for discussions and technique sharing. Twitter/X to follow key researchers and practitioners. Official blogs from Anthropic, OpenAI and Google for announcements and guides. Newsletters like The Batch (Andrew Ng) and Ben's Bites to stay current on the ecosystem.

Advanced Prompt Engineering Techniques

These intermediate to expert-level techniques allow you to tackle complex problems, improve response reliability and leverage the reasoning capabilities of the latest models.

- Chain of Thought (CoT) — A technique published by Wei et al. (2022) that gets the model to produce intermediate reasoning steps before giving its final answer. It substantially improves performance on mathematical, logical and other multi-step reasoning problems, particularly with large models. Two main variants: few-shot CoT (Wei et al.), where the examples in the prompt include worked-out reasoning, and zero-shot CoT (Kojima et al., 2022), which simply adds 'Let's think step by step' to the prompt. Recent reasoning models (OpenAI's o1 and o3, Claude with extended thinking) perform this kind of reasoning natively.

- Tree of Thoughts (ToT) — An extension of Chain of Thought proposed by Yao et al. (2023). Instead of following a single linear chain of reasoning, the model explores multiple reasoning paths, evaluates each branch, and selects the most promising one. Particularly effective for planning problems, logic puzzles and strategic decisions. A simplified single-prompt approximation: ask the model to generate 3 different approaches, evaluate the pros and cons of each, then choose the best one.

- Self-Consistency — A technique by Wang et al. (2022) that samples multiple chain-of-thought responses to the same prompt (with a nonzero temperature so that the reasoning paths differ), then selects the final answer that appears most often. Significantly increases reliability on reasoning tasks. Principle: if multiple different reasoning paths arrive at the same conclusion, that conclusion is more likely to be correct. A lightweight single-prompt approximation: ask the model to solve the problem in 3 different ways and compare the results.

- ReAct (Reasoning + Acting) — A framework by Yao et al. (2022) that alternates between reasoning (Thought), action (Act) and observation (Obs). The model thinks about what it needs to do, executes an action (search, calculation, tool call), observes the result, then decides the next step. This is the foundation of modern AI agents. Example: Thought: I need to find the population of France → Act: search('population France 2025') → Obs: 68.4 million → Thought: Now I can answer...

- Retrieval-Augmented Generation (RAG) — A technique that enriches the prompt with information dynamically retrieved from an external knowledge base. The process: 1) the user's question is converted to an embedding vector, 2) the most similar documents are retrieved, 3) these documents are injected into the prompt context, 4) the model responds based on this retrieved information. It can considerably reduce hallucinations and makes it possible to work with proprietary or recent data.

- Least-to-Most Prompting — A decomposition technique that involves asking the model to break down a complex problem into simpler sub-problems, then solving them in order from simplest to most complex. Each sub-answer feeds the context for the next one. Very effective for mathematical problems, project planning and multi-factor analyses.

- Generated Knowledge Prompting — Before answering a question, you first ask the model to generate relevant knowledge on the topic, then use that knowledge to formulate its response. Two steps: 1) 'Generate 5 important facts about [topic]' 2) 'Based on these facts, answer [question]'. Improves the factuality and depth of responses.

- Prompt Chaining — Breaking down a complex task into a series of sequential prompts where the output of each step feeds the next. Allows managing complex workflows while maintaining quality at each step. Example for an article: Prompt 1 (outline) → Prompt 2 (introduction) → Prompt 3 (sections) → Prompt 4 (conclusion) → Prompt 5 (revision). Each step can be validated and adjusted before moving to the next.
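
Two of the techniques above, the Self-Consistency vote and the retrieval step of RAG, need only a little code around the model call. A minimal sketch, assuming each sampled completion ends with an `Answer: ...` line and using bag-of-words cosine similarity as a stand-in for a real embedding model and vector store; the model calls themselves are omitted and all helper names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Optional


# --- Self-Consistency: majority vote over sampled final answers -------------
def extract_answer(completion: str) -> Optional[str]:
    """Return the last 'Answer: ...' value in a completion (an assumed output convention)."""
    found = re.findall(r"Answer:\s*(.+)", completion)
    return found[-1].strip() if found else None


def self_consistency(completions: list[str]) -> Optional[str]:
    """Pick the most frequent final answer across independently sampled completions."""
    answers = [a for a in map(extract_answer, completions) if a is not None]
    return Counter(answers).most_common(1)[0][0] if answers else None


# --- RAG: retrieve the most relevant documents and inject them ---------------
def vectorize(text: str) -> Counter:
    """Bag-of-words term counts, standing in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the question."""
    query = vectorize(question)
    return sorted(documents, key=lambda d: cosine(query, vectorize(d)), reverse=True)[:k]


def build_rag_prompt(question: str, documents: list[str]) -> str:
    context = "\n---\n".join(retrieve(question, documents))
    return (
        "Answer using only the context below. If the answer is not in it, say so.\n"
        f"### Context\n{context}\n### Question\n{question}"
    )


samples = ["3 + 4 = 7, plus 5 gives 12. Answer: 12", "Answer: 12", "Answer: 14"]
print(self_consistency(samples))  # prints 12

docs = [
    "The store opens at 10am on Sunday.",
    "Returns are accepted within 30 days.",
    "Shipping is free over 50 EUR.",
]
print(build_rag_prompt("When does the store open on Sunday?", docs))
```

In a real pipeline, `samples` would come from running the same chain-of-thought prompt several times with a nonzero temperature, and `retrieve` would query a vector index instead of scoring every document.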

Fundamental Prompt Engineering Techniques

These techniques form the foundation of all prompt engineering practice. They are applicable to all language models and cover the majority of common use cases.

- Zero-shot Prompting — A technique where you give a direct instruction without providing any example. The model relies solely on its prior knowledge acquired during training. Effective for simple and well-defined tasks (translation, summarization, basic classification). Example: 'Classify the sentiment of this sentence as positive, negative or neutral: I love this product, it changed my life!' The model responds directly without needing prior examples.

- One-shot Prompting — You provide a single example before the actual request. This example serves as a template for the expected format, tone and structure. Particularly useful when the output format is specific or unusual. Example: 'Text: I love this product → Sentiment: Positive / Text: This service is disappointing → Sentiment: ?' From this single example, the model can infer the expected pattern.

- Few-shot Prompting — You provide several examples (typically 3 to 5) to establish a clear pattern. Additional examples generally help the model infer the format, style and expectations, up to a point. This is the go-to technique for classification, extraction or generation tasks with specific formats. Note: too many examples consume tokens unnecessarily; example quality takes priority over quantity.

- Role Prompting (Persona) — Assigning a specific role or expertise to the model before formulating the request. Example: 'You are a senior software architect with 20 years of experience in distributed systems.' This technique guides the language register, level of detail and perspective of responses, and is often combined with other techniques. In practice, specific roles (with years of experience, specialty, context) tend to steer style and focus better than generic ones, although research findings on whether personas improve factual accuracy are mixed.

- Instruction Prompting — Formulating explicit, clear and unambiguous instructions. Uses precise action verbs (analyze, compare, summarize, propose, list) and specifies the output format. This is the foundation of any effective interaction with an LLM. Best practices: one instruction per prompt, use delimiters (```, ---, ###) to separate context and instruction, specify what NOT to do in addition to what to do.

- Contextual Prompting — Providing rich and relevant context to anchor the model's response in a specific situation. Includes background, business constraints, target audience, available data. Well-defined context reduces hallucinations and increases relevance. Golden rule: only provide the necessary context — too much context can drown essential information and dilute response quality.
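
Zero-shot, one-shot and few-shot prompting differ only in how many worked examples precede the real input, so one builder can cover all three. A minimal sketch reusing the sentiment examples from this section; the `Text:`/`Sentiment:` labels, the `###` delimiter and the function name are illustrative assumptions, and ending the prompt on an open `Sentiment:` line invites the model to complete just the label.

```python
def build_few_shot_prompt(instruction: str, examples: list[tuple[str, str]], query: str) -> str:
    """Instruction, then zero or more labelled examples, then the unlabelled query."""
    blocks = [instruction, "###"]  # the delimiter separates the instruction from the data
    for text, label in examples:
        blocks.append(f"Text: {text}\nSentiment: {label}")
    blocks.append(f"Text: {query}\nSentiment:")  # left open for the model to complete
    return "\n\n".join(blocks)


EXAMPLES = [
    ("I love this product, it changed my life!", "Positive"),
    ("This service is disappointing.", "Negative"),
    ("The package arrived on Tuesday.", "Neutral"),
]

instruction = "Classify the sentiment of each text as Positive, Negative or Neutral."
query = "The support team answered quickly and fixed my issue."

zero_shot = build_few_shot_prompt(instruction, [], query)
one_shot = build_few_shot_prompt(instruction, EXAMPLES[:1], query)
few_shot = build_few_shot_prompt(instruction, EXAMPLES, query)
print(few_shot)
```

Keeping every example in exactly the same format as the final query is what lets the model pick up the pattern; mixing formats across examples tends to produce inconsistent output.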

Prompt Engineering Trends in 2025

The field of prompt engineering is constantly evolving. Here are the major trends shaping the discipline in 2025 and beyond.

- Autonomous Agents and Orchestration — Prompt engineering is evolving toward designing agents capable of using tools, making decisions and executing complete workflows. Multi-agent systems (CrewAI, AutoGen, LangGraph) allow breaking down complex tasks among multiple specialized LLMs that collaborate. The prompt engineer becomes an architect of agentic systems.

- Reasoning Models — Models like o1, o3 (OpenAI) and Claude with extended thinking natively integrate step-by-step reasoning. This changes the practice: for these models, simpler and more direct prompts often work better than ultra-structured prompts, because the model handles the reasoning decomposition itself.

- Multimodal Prompting — Prompts are no longer just text. GPT-4o, Gemini and Claude accept images and documents such as PDFs as inputs, and Gemini also accepts audio and video. Prompt engineering extends to the intelligent combination of modalities: describing an image to modify it, analyzing a chart with text instructions, transcribing and summarizing audio.

- Prompt Caching and Cost Optimization — With massive LLM adoption in production, cost optimization becomes critical. Prompt caching (Anthropic, OpenAI) reuses the processing of repeated prompt prefixes to reduce cost and latency, which rewards placing stable content (system instructions, reference documents) at the start of the prompt. Prompt engineering now includes economic optimization: the same results for fewer or cheaper tokens.

- Automated Evaluation (LLM-as-Judge) — Using an LLM to evaluate the responses of another LLM is becoming the norm. Frameworks like RAGAS, LangSmith and Promptfoo automate the evaluation of relevance, factuality and coherence. The prompt engineer must master designing evaluation prompts as much as generation prompts.

- Prompt Security and Red Teaming — Prompt security has become a major concern. Prompt injection (manipulation of system instructions), jailbreaking and sensitive data extraction are real threats. Defensive prompt engineering — designing prompts that are robust against these attacks — is an increasingly sought-after skill.
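
Evaluation prompts deserve the same rigor as generation prompts, including a strict output format that code can validate. A minimal sketch of an LLM-as-judge step; the 1-5 scale, the JSON schema and the helper names are assumptions for illustration, and the call to the judge model itself is left out.

```python
import json
from typing import Optional

# Doubled braces survive str.format() as literal braces in the JSON example.
JUDGE_TEMPLATE = """You are grading an answer written by another model.
Score it from 1 (poor) to 5 (excellent) on relevance, factuality and coherence.
Return only JSON: {{"relevance": <int>, "factuality": <int>, "coherence": <int>, "rationale": "<one sentence>"}}

### Question
{question}

### Answer to grade
{answer}"""

CRITERIA = ("relevance", "factuality", "coherence")


def build_judge_prompt(question: str, answer: str) -> str:
    return JUDGE_TEMPLATE.format(question=question, answer=answer)


def parse_judgement(raw: str) -> Optional[dict]:
    """Return the scores if the judge followed the schema, else None (so the caller can retry)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    for key in CRITERIA:
        score = data.get(key)
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(score, bool) or not isinstance(score, int) or not 1 <= score <= 5:
            return None
    return data


print(build_judge_prompt("What is the capital of France?", "Paris."))
print(parse_judgement('{"relevance": 5, "factuality": 5, "coherence": 4, "rationale": "Correct and concise."}'))
print(parse_judgement("Looks good to me!"))  # not JSON, so None
```

Rejecting out-of-range or non-JSON verdicts, rather than guessing a score, keeps a malformed judgement from silently skewing aggregate metrics.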

Conclusion

Prompt engineering is much more than a buzzword: it is a maturing technical discipline that combines methodological rigor, creativity and deep understanding of AI systems. From fundamental techniques like few-shot prompting to advanced approaches like ReAct and autonomous agents, the realm of possibilities keeps expanding. Whether you want to optimize your personal productivity, build AI applications, or make prompt engineering your career, the skills covered in this guide give you a solid foundation to excel. The Art of Prompting provides hands-on exercises, a prompt builder, and optimized templates to put these techniques into practice today.
